BACD July 2012 : The Xen Cloud Platform

- 1. The Xen Cloud Platform. Mike McClurg, Xen Cloud Platform Project Lead, [email protected]

- 2. A Brief History of Xen in the Cloud • Late 90s: XenoServer Project (Cambridge Univ.) – “The XenoServer project is building a public infrastructure for wide-area distributed computing. We envisage a world in which XenoServer execution platforms will be scattered across the globe and available for any member of the public to submit code for execution.” • Global Public Computing – “This dissertation proposes a new distributed computing paradigm, termed global public computing, which allows any user to run any code anywhere. Such platforms price computing resources, and ultimately charge users for resources consumed.” (Evangelos Kotsovinos, PhD dissertation, 2004)

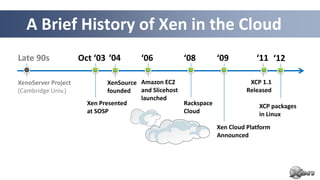

- 3. A Brief History of Xen in the Cloud • Late 90s: XenoServer Project (Cambridge Univ.) • Oct ‘03: Xen presented at SOSP • ‘04: XenSource founded • ‘06: Amazon EC2 and Slicehost launched • ‘08: Rackspace Cloud • ‘09: Xen Cloud Platform announced • ‘11: XCP 1.1 released • ‘12: XCP packages in Linux

- 4. The Xen Hypervisor was designed for the Cloud straight from the outset!

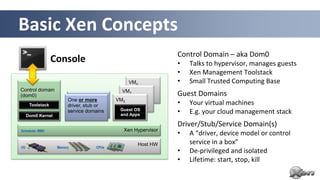

- 5. Basic Xen Concepts • Control Domain, aka Dom0 – Talks to hypervisor, manages guests – Xen Management Toolstack – Small Trusted Computing Base • Guest Domains – Your virtual machines – E.g. your cloud management stack • Driver/Stub/Service Domain(s) – A “driver, device model or control service in a box” – De-privileged and isolated – Lifetime: start, stop, kill • Diagram labels: Console; Control domain (dom0) with Toolstack and Dom0 Kernel; VM0, VM1 … VMn with Guest OS and Apps; one or more driver, stub or service domains; Xen Hypervisor (Scheduler, MMU); Host HW (I/O, Memory, CPUs)

- 6. Xen Variants for Server & Cloud • Xen Hypervisor – Toolstack / Console: Default / XL (XM), or Libvirt / VIRSH – Get binaries from: Linux distros – Products: Oracle VM, Huawei UVP • XCP – Toolstack / Console: XAPI / XE – Get binaries from: Debian & Ubuntu, XCP from Xen.org – Products: Citrix XenServer • Used by: many others

- 7. XCP: The Xen Cloud Platform

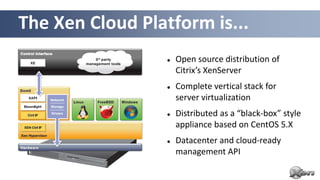

- 8. The Xen Cloud Platform is... • Open source distribution of Citrix’s XenServer • Complete vertical stack for server virtualization • Distributed as a “black-box” style appliance based on CentOS 5.X • Datacenter and cloud-ready management API

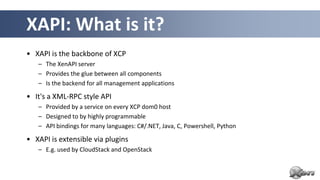

- 9. XAPI: What is it? • XAPI is the backbone of XCP – The XenAPI server – Provides the glue between all components – Is the backend for all management applications • It's an XML-RPC style API – Provided by a service on every XCP dom0 host – Designed to be highly programmable – API bindings for many languages: C#/.NET, Java, C, PowerShell, Python • XAPI is extensible via plugins – E.g. used by CloudStack and OpenStack
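
Because XAPI is an XML-RPC style API, a client can in principle talk to it with nothing more than a generic XML-RPC library. The sketch below is a minimal illustration, not the official binding: it assumes the two-argument form of `session.login_with_password` and the `Status`/`Value`/`ErrorDescription` response envelope used by XenAPI, and the helper names `unwrap`, `XapiError` and `list_vm_names` are invented for this example.

```python
import xmlrpc.client  # a real host would be reached via xmlrpc.client.ServerProxy


class XapiError(Exception):
    """Raised when a XenAPI call reports Status == "Failure"."""


def unwrap(result):
    """Return the Value of a XenAPI response envelope, or raise XapiError."""
    if result.get("Status") == "Success":
        return result["Value"]
    raise XapiError(result.get("ErrorDescription", ["UNKNOWN"]))


def list_vm_names(proxy, username, password):
    """Log in, read the name-label of every VM object, and always log out.

    `proxy` is any object exposing XenAPI methods as attributes, for
    example an xmlrpc.client.ServerProxy pointed at a dom0 host.
    """
    session = unwrap(proxy.session.login_with_password(username, password))
    try:
        names = []
        for ref in unwrap(proxy.VM.get_all(session)):
            names.append(unwrap(proxy.VM.get_name_label(session, ref)))
        return sorted(names)
    finally:
        # Release the session even if one of the calls above failed.
        proxy.session.logout(session)
```

Against a real host this might be called as `list_vm_names(xmlrpc.client.ServerProxy("https://xcp-host.example"), "root", "...")`, where the host name and credentials are placeholders. Note that `VM.get_all` also returns templates and the control domain, so a real script would usually filter the result.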

- 10. XCP Feature Overview • VM lifecycle: live snapshots, checkpoint, migration • Resource pools: flexible storage and networking • Event tracking: progress, notification • Upgrade and patching capabilities • Real-time performance monitoring and alerting • Built-in support and templates for Windows and Linux guests • Paravirtualized drivers optimized for Windows VMs • OpenFlow support with Open vSwitch built-in

- 11. XAPI Management Options • XAPI frontend command line tool: xe (tab-completable, scriptable) • Desktop GUIs – Citrix XenCenter (Windows-only) – OpenXenManager (open source cross-platform XenCenter clone) • Web interfaces – Xen VNC Proxy (XVP) – XenWebManager (web-based clone of OpenXenManager) • XCP Ecosystem: – xen.org/community/vendors/XCPProjectsPage.html – xen.org/community/vendors/XCPProductsPage.html
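
Since `xe` is scriptable, one way to drive it from a program is to build the argument list and parse its `--minimal` output, which prints values as a comma-separated list. The following is a rough sketch under those assumptions; the helper names `xe_command`, `parse_minimal` and `running_vm_uuids` are hypothetical, and the code expects `xe` to be on the PATH of the machine where it runs (typically dom0).

```python
import subprocess


def xe_command(subcommand, minimal=False, **params):
    """Build an argv list for xe, turning power_state="running" into power-state=running."""
    argv = ["xe", subcommand]
    for key, value in sorted(params.items()):
        argv.append(f"{key.replace('_', '-')}={value}")
    if minimal:
        argv.append("--minimal")
    return argv


def parse_minimal(output):
    """Split the comma-separated output of an xe --minimal call into a list."""
    return [item for item in output.strip().split(",") if item]


def running_vm_uuids(run=subprocess.check_output):
    """Return the UUIDs of running VMs; `run` is injectable so it can be faked."""
    argv = xe_command("vm-list", minimal=True, power_state="running", params="uuid")
    return parse_minimal(run(argv, text=True))
```

Passing the command runner as a parameter keeps the parsing logic testable on a machine without XCP installed.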

- 12. XCP and Cloud Orchestration Stacks

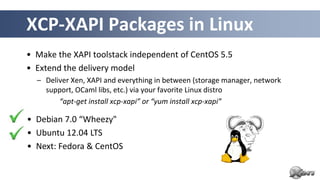

- 14. XCP-XAPI Packages in Linux • Make the XAPI toolstack independent of CentOS 5.5 • Extend the delivery model – Deliver Xen, XAPI and everything in between (storage manager, network support, OCaml libs, etc.) via your favorite Linux distro: “apt-get install xcp-xapi” or “yum install xcp-xapi” • Debian 7.0 “Wheezy” • Ubuntu 12.04 LTS • Next: Fedora & CentOS

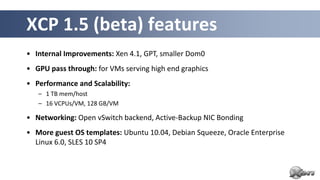

- 15. XCP 1.5 (beta) features • Internal Improvements: Xen 4.1, GPT, smaller Dom0 • GPU pass through: for VMs serving high end graphics • Performance and Scalability: – 1 TB mem/host – 16 VCPUs/VM, 128 GB/VM • Networking: Open vSwitch backend, Active-Backup NIC Bonding • More guest OS templates: Ubuntu 10.04, Debian Squeeze, Oracle Enterprise Linux 6.0, SLES 10 SP4

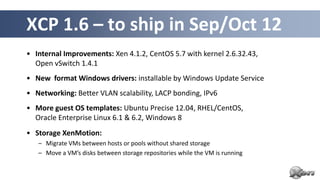

- 16. XCP 1.6 – to ship in Sep/Oct 12 • Internal Improvements: Xen 4.1.2, CentOS 5.7 with kernel 2.6.32.43, Open vSwitch 1.4.1 • New format Windows drivers: installable by Windows Update Service • Networking: Better VLAN scalability, LACP bonding, IPv6 • More guest OS templates: Ubuntu Precise 12.04, RHEL/CentOS, Oracle Enterprise Linux 6.1 & 6.2, Windows 8 • Storage XenMotion: – Migrate VMs between hosts or pools without shared storage – Move a VM’s disks between storage repositories while the VM is running

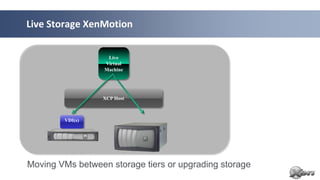

- 17. Storage XenMotion in pictures

- 18. Live Storage XenMotion • Moving VMs between storage tiers or upgrading storage • Diagram labels: Live Virtual Machine; XCP Host; VDI(s)

- 19. Live Storage XenMotion • Moving or rebalancing VMs between pools (local to local) • Diagram labels: Live Virtual Machine; VDI(s); XCP Host with Local Storage in XCP Pool 1; XCP Host with Local Storage in XCP Pool 2

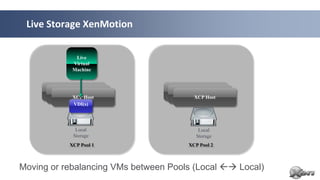

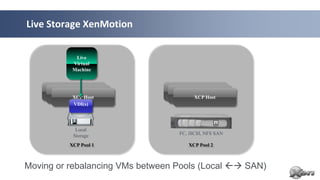

- 20. Live Storage XenMotion • Moving or rebalancing VMs between pools (local to SAN) • Diagram labels: Live Virtual Machine; VDI(s); XCP Host with Local Storage in XCP Pool 1; XCP Host with FC, iSCSI or NFS SAN storage in XCP Pool 2

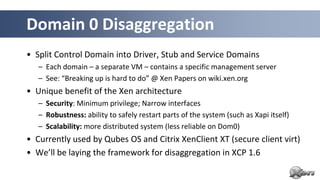

- 22. Domain 0 Disaggregation • Split Control Domain into Driver, Stub and Service Domains – Each domain – a separate VM – contains a specific management server – See: “Breaking up is hard to do” @ Xen Papers on wiki.xen.org • Unique benefit of the Xen architecture – Security: Minimum privilege; Narrow interfaces – Robustness: ability to safely restart parts of the system (such as Xapi itself) – Scalability: more distributed system (less reliant on Dom0) • Currently used by Qubes OS and Citrix XenClient XT (secure client virt) • We’ll be laying the framework for disaggregation in XCP 1.6

- 23. • IRC: #xen-api on Freenode • Mailing List: [email protected] • Wiki: http://wiki.xen.org – Beginners & User Categories – XCP Category • Excellent XCP Tutorials – A day's worth of material @ http://xen.org/community/xenday11 • Questions… • Slides available under CC-BY-SA 3.0. Modified from www.slideshare.net/xen_com_mgr

Editor's Notes

- XenoServer: the enablers as well as the concept

- Note: 10th birthday of the project is coming up

- Hold this thought! We will come back to this later!

- PVOPS is the kernel infrastructure that lets Linux run on top of a PV hypervisor

- Dom0: In a typical Xen set-up, Dom0 contains a smorgasbord of functionality: system boot; device emulation & multiplexing; administrative toolstack; drivers (e.g. storage & network); etc. Large TCB, but smaller than in a Type 2 hypervisor. Driver/Stub/Service Domains: also known as disaggregation.

- Device model emulated in QEMU. Models for newer devices are much faster, but for now PV is even faster.

- Automatic performance: PV on HVM guests are very close to PV guests in benchmarks that favour PV MMUs. PV on HVM guests are far ahead of PV guests in benchmarks that favour nested paging.

- Where are we? 1) Linux 3 contains everything needed to run Xen on a vanilla kernel, both as Dom0 and DomU. 2) That’s of course a bit old hat now. 3) But it is worth mentioning that it only took 5 years to upstream PVOPS into the kernel.

- Just one example of a survey, many more exist: http://www.colt.net/cio-research/z2-cloud-2.html. According to many surveys, security is actually the main reason which makes or breaks cloud adoption. Better security means more adoption. Concerns about security mean slowed adoption.

- So for a hypervisor such as Xen, which is powering 80% of the public cloud (Rackspace, AWS and many other VPS providers use Xen), and with cloud computing becoming mainstream, furthering security is really important. One of the key things there is isolation between VMs, but also simplicity, as I pointed out earlier. But there are also a number of advanced features in Xen which are not that widely known, so I wanted to give you a short overview of two of them.

- Ask some questions

- Example: XOAR. Self-destructing VMs (destroyed after initialization): PCIBack = virtualizes access to PCI bus config. Restartable VMs (periodic restarts): NetBack (physical network driver exposed to guest) = restarted on timer; Builder (instantiates other VMs) = restarted on each request.

- What about domain 0 itself? Once we've disaggregated domain 0, what will be left? The answer is: very little! We'll still have the logic for booting the host, for starting and stopping VMs, and for deciding which VM should control which piece of hardware... but that's about it. At this point domain 0 could be considered as a small "embedded" system, like a home NAT box or router.

- Note: not exactly 1:1 with XE. Comparisons to other APIs in the virtualization space (source: Steven Maresca): Generally speaking, XAPI is well-designed and well-executed. XAPI makes it pleasantly easy to achieve quick productivity. XAPI is set up to work with frameworks such as CloudStack and OpenStack. Some SOAPy lovers of big XML envelopes and WSDLs scoff at XML-RPC, but it certainly gets the job done with few complaints. Example code: http://bazaar.launchpad.net/~nova-core/nova/github/files/head:/plugins/xenserver/xenapi/etc/xapi.d/plugins/ and https://github.com/xen-org/xen-api/blob/master/scripts/examples/python/XenAPIPlugin.py

- VM lifecycle (start, stop, resume) ... automation is the key point. Live snapshots: takes a snapshot of a live VM (e.g. for disaster recovery or migration). Resource pools (multiple physical machines): XS & XCP only. Live migration: VM is backed up while running, onto shared storage (e.g. NFS) in a pool, and when completed restarted elsewhere in that pool. Disaster recovery: you can find lots of information on how this works at http://support.citrix.com/servlet/KbServlet/download/17141-102-19301/XenServer_Pool_Replication_-_Disaster_Recovery.pdf (the key point is that I can back up the metadata for the entire VM). Flexible storage: XAPI hides the details of storage and networking, i.e. I apply generic commands from XAPI (NFS, NetApp, iSCSI ... once it's created they all appear the same). I only need to know the storage type when I create storage and network objects (OOL). Upgrading a host to a later version of XCP (all my configs and VMs stay the same) … and patching (broken now due to a bug; can apply security patches to XCP/XS or Dom0 but not DomU).

- * Host Architectural Improvements. XCP 1.5 now runs on the Xen 4.1 hypervisor, provides GPT (new partition table type) support and a smaller, more scalable Dom0. * GPU Pass-Through. Enables a physical GPU to be assigned to a VM providing high-end graphics. * Increased Performance and Scale. Supported limits have been increased to 1 TB memory for XCP hosts, and up to 16 virtual processors and 128 GB virtual memory for VMs. Improved XCP Tools with smaller footprint. * Networking Improvements. Open vSwitch is now the default networking stack in XCP 1.5 and now provides formal support for Active-Backup NIC bonding. * Enhanced Guest OS Support. Support for Ubuntu 10.04 (32/64-bit). Updated support for Debian Squeeze 6.0 64-bit, Oracle Enterprise Linux 6.0 (32/64-bit) and SLES 10 SP4 (32/64-bit). Experimental VM templates for CentOS 6.0 (32/64-bit), Ubuntu 10.10 (32/64-bit) and Solaris 10. * Virtual Appliance Support (vApp). Ability to create multi-VM and boot sequenced virtual appliances (vApps) that integrate with Integrated Site Recovery and High Availability. vApps can be easily imported and exported using the Open Virtualization Format (OVF) standard.

- Performance: similar to other hypervisors. Maturity: tried & tested, most problems that are problems are well known. Open source: good body of knowledge, tools.