Open Source Insights

Google has been working on software supply-chain security for many years, and transitive dependencies remain one of the most complex and least understood aspects. While we will be integrating this data into our Cloud and internal products in a variety of ways, we believe there is immediate value in helping developers understand and visualize dependencies. Today, we are excited to share an exploratory visualization site: Open Source Insights, which provides an interactive view of the dependencies of open source projects.

Software development practices have evolved significantly over the last few years. Distributed, collaborative feature development, consumption of open source and third-party packages, and publicly maintained software libraries have become commonplace, partly as a result of the widespread use of open source software. The advantages of open source are so clear that people and companies that would once have rejected OSS are now adopting it as a critical element of their environment.

But OSS brings challenges too. The pace of change is electric, and it can be hard to keep up. The software packages that a large project depends on might update too frequently for you to keep a clear picture of what is happening. And those packages, in turn, can change their own dependencies to provide new features or fix bugs. Security problems and other issues can arise unexpectedly in your project as a result, and the scale of the problem can make it all difficult to manage. Even a modest OSS project might depend on hundreds of packages.

There are tools to help, of course: vulnerability scanners and dependency audits that can help identify when a package is exposed to a vulnerability. But it can still be difficult to visualize the big picture, to understand what you depend on, and what that implies.

Open Source Insights provides a visualization of a project’s dependencies and their properties. Our exploratory website can be used to get an overview of how a particular software package is put together. Among other features, it provides interactive tools to visualize and analyze full, transitive dependency graphs. It also has a comparison tool to highlight how different versions of a package might affect your dependencies, perhaps by changing their own dependencies, adding licensing requirements, or fixing security problems.

Dependency graph for express 4.17.1

Open Source Insights shows you all this information about a package without asking you to install the package first. You can see instantly what installing a package, or an updated version of it, might mean for your project. You can also see how popular the package is and find links to its source code and other information, and then decide whether it should be installed. Insights also helps you see the importance of your project by showing the projects that depend on it: its dependents. Even a small project is important if a large number of other projects depend on it, either directly or through transitive dependencies.
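To make the idea of dependents concrete, here is a minimal sketch in Python. It is not how Open Source Insights is implemented; the package names and the `DIRECT_DEPS` map are hypothetical, and the helper functions are ours. It inverts a map of direct dependencies and then walks it to collect every package that depends on a given one, directly or transitively.

```python
from collections import defaultdict

# Hypothetical data: each package maps to the packages it depends on directly.
DIRECT_DEPS = {
    "app-one": ["tiny-lib"],
    "app-two": ["framework"],
    "framework": ["tiny-lib"],
    "tiny-lib": [],
}


def invert(direct_deps):
    """Build the reverse map: package -> packages that depend on it directly."""
    dependents = defaultdict(set)
    for package, deps in direct_deps.items():
        for dep in deps:
            dependents[dep].add(package)
    return dependents


def all_dependents(package, direct_deps):
    """Return every direct or transitive dependent of `package`."""
    reverse = invert(direct_deps)
    seen = set()
    stack = list(reverse.get(package, ()))
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(reverse.get(current, ()))
    return seen


print(sorted(all_dependents("tiny-lib", DIRECT_DEPS)))
# ['app-one', 'app-two', 'framework']
```

In this toy graph, `app-two` never mentions `tiny-lib`, yet it still counts as a dependent because it reaches `tiny-lib` through `framework`.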

Open Source Insights continuously scans millions of projects in the open source software ecosystem, gathering information about packages, including licensing, ownership, security issues, and other metadata such as download counts, popularity signals, and OpenSSF Scorecards. It then constructs a full dependency graph—transitively tracking dependencies, dependencies' dependencies, and so on—and incorporates the metadata, then publishes it so you can see how it all might affect your software. And the information it provides is continually updated.
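As a rough illustration of what "transitively tracking dependencies" means, the sketch below computes the full set of packages reachable from a starting package. It is a simplified model under our own assumptions, not the service's actual algorithm: the `DIRECT_DEPS` data is made up, and real package managers also have to resolve version constraints, which this ignores.

```python
from collections import deque

# Hypothetical data: each package maps to the packages it requires directly.
DIRECT_DEPS = {
    "app": ["web-framework", "logger"],
    "web-framework": ["http-parser", "logger"],
    "logger": ["color-utils"],
    "http-parser": [],
    "color-utils": [],
}


def transitive_dependencies(package, direct_deps):
    """Return every package reachable from `package` via a breadth-first walk."""
    seen = set()
    queue = deque(direct_deps.get(package, []))
    while queue:
        dep = queue.popleft()
        if dep in seen:
            continue  # already visited; also keeps cycles from looping forever
        seen.add(dep)
        queue.extend(direct_deps.get(dep, []))
    seen.discard(package)  # a cycle could lead back to the starting package
    return seen


print(sorted(transitive_dependencies("app", DIRECT_DEPS)))
# ['color-utils', 'http-parser', 'logger', 'web-framework']
```

Here `app` declares only two direct dependencies but ends up with four packages in its graph, which is the effect that becomes hard to track by hand at the scale of hundreds of packages.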

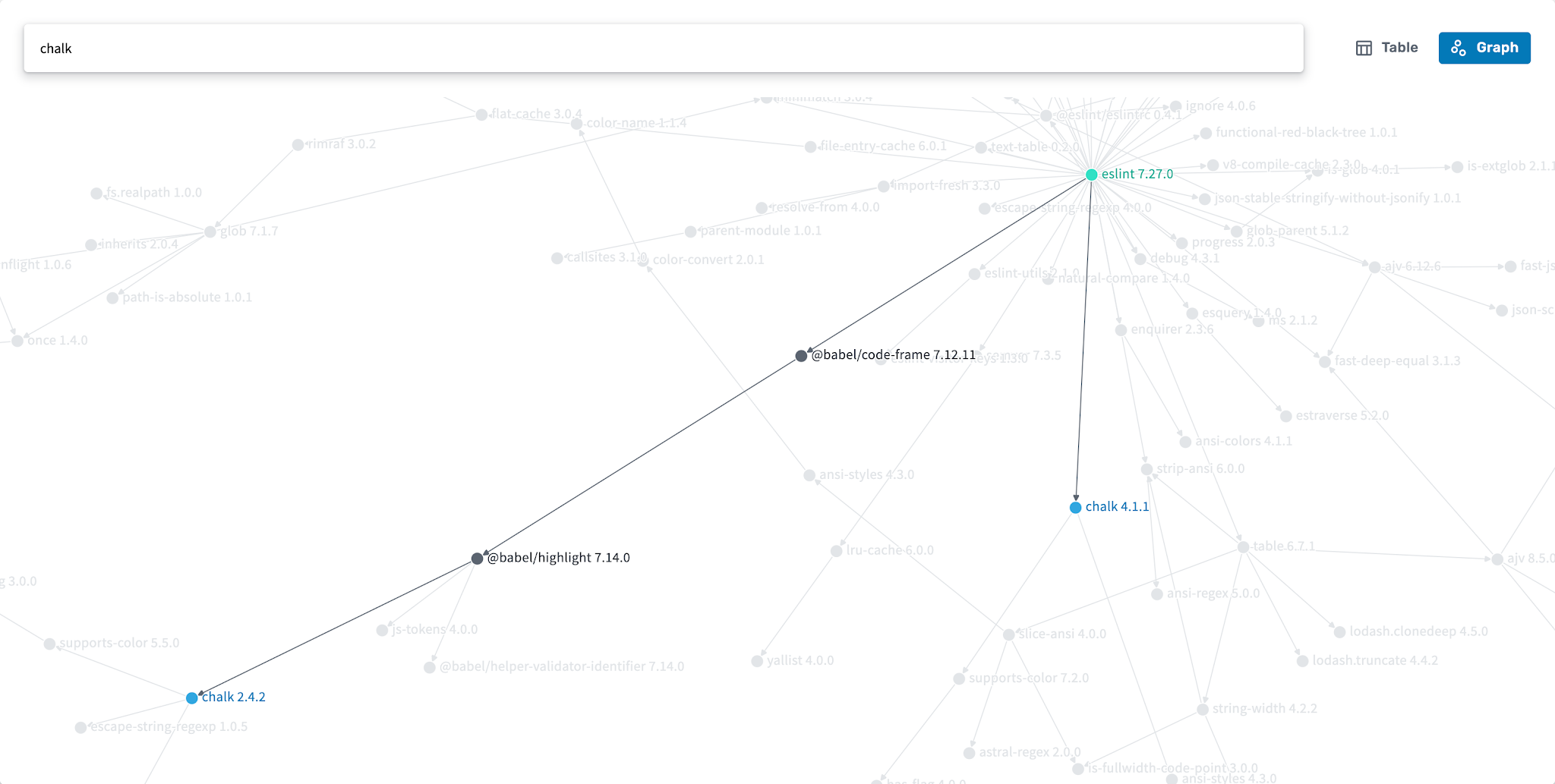

Filtered dependency graph showing how eslint 7.27.0 depends on chalk 2.4.2 and 4.1.1

This information can help visualize how software is put together, whether an update is worth doing, or how to fix a problem.
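One way to think about a filtered graph like the one above is as the set of paths from a root package to every version of a package you care about. The sketch below is illustrative only: the `name@version` node format, the package names, and the `paths_to` helper are all hypothetical and are not taken from deps.dev.

```python
# Hypothetical data: nodes are "name@version" strings mapping to direct dependencies.
DIRECT_DEPS = {
    "linter@7.0.0": ["colors@4.0.0", "table@6.0.0"],
    "table@6.0.0": ["colors@2.0.0"],
    "colors@4.0.0": [],
    "colors@2.0.0": [],
}


def paths_to(root, name, direct_deps, path=()):
    """Return every dependency path from `root` to any version of `name`."""
    path = path + (root,)
    # rpartition splits on the last "@", leaving the package name on the left.
    if root.rpartition("@")[0] == name:
        return [path]
    found = []
    for dep in direct_deps.get(root, []):
        if dep not in path:  # skip nodes already on this path to avoid cycles
            found.extend(paths_to(dep, name, direct_deps, path))
    return found


for path in paths_to("linter@7.0.0", "colors", DIRECT_DEPS):
    print(" -> ".join(path))
# linter@7.0.0 -> colors@4.0.0
# linter@7.0.0 -> table@6.0.0 -> colors@2.0.0
```

Listing the paths this way shows why two versions of the same package can appear in one project: one is requested directly and the other is pulled in by an intermediate dependency.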

Today, Open Source Insights supports npm, Maven, Go modules, and Cargo. While we work on adding additional packaging systems, we want to hear from you: How could this data fit into your development workflow? What would make it more useful? You can reach the team at deps.dev to share your thoughts; we’ll be collecting feedback over the coming months and look forward to hearing your ideas on how best to improve supply-chain security.

Visit our website at deps.dev to try it out.

From the Google Cloud team: What this means for GCP’s open cloud

For users of open source software, this may be the first time you’re seeing dependency and vulnerability information in an organized and accessible way. If you’re using a managed service based on open source, it’s important to remember that you may not be affected by all vulnerabilities listed. Your provider may have taken steps to harden the products you use, and when a new vulnerability is disclosed, your provider may take responsibility for patching it on your behalf.

Google Cloud takes both of these steps to help users get the benefits of open cloud while prioritizing security. Multiple layers of hardening create defense-in-depth, which helps protect services like Google Kubernetes Engine (GKE), Cloud Run, and Cloud Functions from a container escape vulnerability. For components that are the user’s responsibility, we’re constantly rolling out new services, such as GKE Autopilot, that automate these responsibilities.

We’re committed to protecting our customers, both through our patch rewards program and the recently launched cyber insurance partnership, the Risk Protection Program, which moves from shared responsibility to shared fate. We look forward to bringing our customers new information on their open source dependencies.